Most website outages don’t begin with a massive attack or a total server meltdown. More often they start with a whisper: a memory leak surfaces, response times lag, and by the time anyone pays attention, visitors have left, revenue has vaporized and trust is in tatters. The silver lining is that many of these errors are avoidable, and that is where AI-enhanced website error detection is changing modern web operations.

Whereas classic monitoring tools rely on fixed thresholds to alert you, AI can learn your website’s normal activity and spot strange behavior before it grows into a full-on catastrophe. With anomaly detection, predictive analytics, intelligent log analysis and faster root cause discovery, organizations are moving from reactive firefighting to proactive prevention. From 2025 onwards, this is no longer an advanced feature but a necessity for any website that has to be fast, stable and reliable.

Key Takeaways

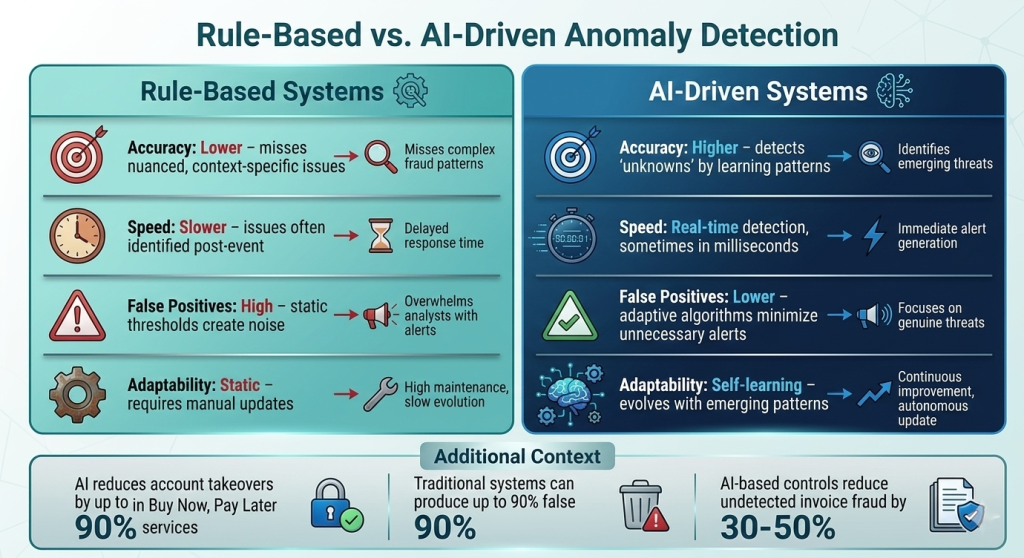

- Static thresholds can miss anomaly patterns, and they often produce false positives as well (spikes from seasonal traffic changes, for example). AI-based anomaly detection learns what is normal for a site, which reduces false positives and surfaces real issues sooner.

- Predictive error prevention is a new paradigm for web operations. By analyzing historical resource trends, AI can predict failures before users notice any downtime or lag.

- AI-powered root cause analysis and remediation now scale well. They can follow incidents through dependency chains and automatically address common problems before they spread.

- Security and performance monitoring have become one discipline. The same behavioral AI that detects system and application anomalies over time can also spot threats like credential stuffing, DDoS activity and script injection.

- Opting for an AI-integrated hosting provider like UltaHost is no longer only about speed. It also builds resiliency, since better AI infrastructure means greater protection from failures and emerging security threats.

Ready to power your Website?

Power your website with smarter, AI-driven hosting from UltaHost.

How AI-Powered Anomaly Detection Actually Works

To understand what AI-based website error detection can do, we need to explain one fundamental concept: baselining. In layman’s terms, AI learns from historical data what normal website behavior looks like, using metrics such as response times, error rates, CPU usage, memory load and database latency. It can then detect unusual deviations from that pattern much more quickly.
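As a rough illustration of baselining, the sketch below learns a rolling mean and standard deviation from recent samples and flags values that deviate strongly. It is a simple statistical stand-in, not the method any particular platform uses; the class name `RollingBaseline`, the window size and the threshold of 3 standard deviations are all assumptions made for the example.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Learns 'normal' from the last `window` samples and flags outliers."""

    def __init__(self, window=60, threshold=3.0, min_samples=10):
        self.samples = deque(maxlen=window)
        self.threshold = threshold      # hypothetical: deviations allowed, in sigmas
        self.min_samples = min_samples  # do not judge before enough history exists

    def observe(self, value):
        """Return True if `value` deviates from the learned baseline."""
        is_anomaly = False
        if len(self.samples) >= self.min_samples:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            # A perfectly flat history has sigma == 0; skip the check then.
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Learn only from values judged normal, so a single outage
        # does not drag the baseline toward the faulty state.
        if not is_anomaly:
            self.samples.append(value)
        return is_anomaly


# Hypothetical response times in milliseconds; the last one is a spike.
latencies_ms = [120, 118, 125, 122, 119, 121, 124, 117, 123, 120, 122, 900]
baseline = RollingBaseline()
flags = [baseline.observe(v) for v in latencies_ms]
```

Only the final 900 ms sample is flagged here. Real systems typically track many metrics at once and account for seasonality, which this sketch deliberately leaves out.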

Different AI models also deal with different types of anomalies:

- Time-based patterns such as traffic flow and server response times are captured by ARIMA and LSTM models.

- Autoencoders and VAEs are useful for detecting unexpected behavior in complex data such as API calls or user sessions.

- SVMs can discriminate between normal and abnormal activity when patterns are more difficult to determine.

As a result, AI monitoring generates fewer false positives than a static threshold tool. In layman’s terms, it understands context better. A traffic surge at noon might be expected, while the same spike at 3 AM could raise a red flag.
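The noon versus 3 AM example can be sketched with a baseline kept per hour of day, so the same request rate is judged against what is usual for that hour. This is a minimal statistical illustration under assumed data, not a production model; the function names and the traffic figures are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev


def build_hourly_baseline(history):
    """history: iterable of (hour_of_day, requests_per_minute) pairs."""
    by_hour = defaultdict(list)
    for hour, rpm in history:
        by_hour[hour].append(rpm)
    # Keep (mean, standard deviation) for every hour with enough samples.
    return {h: (mean(v), stdev(v)) for h, v in by_hour.items() if len(v) >= 2}


def is_unusual(baseline, hour, rpm, threshold=3.0):
    """Compare a reading only against the history of the same hour."""
    mu, sigma = baseline[hour]
    return sigma > 0 and abs(rpm - mu) / sigma > threshold


# Hypothetical history: busy at noon, quiet at 3 AM.
history = [(12, v) for v in (900, 1000, 1100, 950, 1050)]
history += [(3, v) for v in (40, 50, 60, 45, 55)]
baseline = build_hourly_baseline(history)

noon_flag = is_unusual(baseline, 12, 1080)   # within the usual noon range
night_flag = is_unusual(baseline, 3, 1080)   # far above the usual 3 AM range
```

A static threshold would have to treat 1080 requests per minute the same way at both times; the per-hour baseline flags it only at 3 AM.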

The even bigger deal, however, is that AI can correlate problems across your whole stack. Take a slow database query that increases response times, builds up request queues and consumes memory until a service crashes:

- The database slows down.

- The application response time rises.

- The number of queued requests and memory usage increase.

- The service may fail.

Conventional tools tend to issue a separate alert for each symptom. AI-driven platforms package them into a single, clear incident and frequently identify the root cause. For teams responsible for microservices, this makes the difference between a quick fix and hours of reconstructing what happened.
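One simple way to picture how separate alerts become a single incident is time-window grouping: alerts that follow each other closely are merged, and the earliest one is treated as a candidate origin. The sketch below assumes hypothetical `Alert` and `Incident` types and a 120-second window; real platforms combine this with dependency and causal information rather than timing alone.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    timestamp: float  # seconds on a shared clock
    service: str
    symptom: str


@dataclass
class Incident:
    alerts: list = field(default_factory=list)

    @property
    def probable_origin(self):
        # Heuristic only: the earliest symptom is a candidate root cause.
        return min(self.alerts, key=lambda a: a.timestamp)


def group_alerts(alerts, window_seconds=120.0):
    """Merge alerts that follow the previous alert within the window."""
    incidents = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        last = incidents[-1].alerts[-1] if incidents else None
        if last and alert.timestamp - last.timestamp <= window_seconds:
            incidents[-1].alerts.append(alert)
        else:
            incidents.append(Incident(alerts=[alert]))
    return incidents


# Hypothetical alerts mirroring the cascade described above.
alerts = [
    Alert(60.0, "api", "response time rising"),
    Alert(0.0, "database", "slow queries"),
    Alert(110.0, "api", "request queue and memory growing"),
    Alert(150.0, "api", "service crashed"),
    Alert(4000.0, "cdn", "cache miss ratio high"),
]
incidents = group_alerts(alerts)
```

The four cascade alerts collapse into one incident whose probable origin is the database, while the unrelated CDN alert stays separate.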

From Reactive to Predictive: The Paradigm Shift in Website Error Prevention

Website reliability has moved past simply responding to and repairing issues as they crop up. The shift is from measuring failures after the fact to preventing them before users ever experience them. This is why proactive AI-backed monitoring is replacing reactive approaches: instead of waiting for alerts after something goes wrong, predictive systems detect early trends, estimate risk and help teams react before performance degradation or outages occur.

AIOps platforms enable this transition by:

- Creating system baselines dynamically from past data

- Detecting minor discrepancies before they escalate into significant faults

- Connecting related signals to reduce alert noise

- Automating solutions for common and well-known problems

- Improving future predictions based on the lessons of past incidents

This has technical and business implications. AI-based anomaly detection has been reported to reduce mean time to detect for major incidents by 7.5 minutes and to cover 63% of major incidents. For websites where even a small amount of downtime can translate to lost revenue and a negative user experience, that improvement is important.
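To make the idea of predicting risk from early trends concrete, the sketch below fits a straight line to recent memory readings and estimates how long until a limit is reached. A least-squares line is a deliberately simple stand-in for the forecasting models mentioned earlier; the function names, the sample readings and the 2000 MB limit are assumptions for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def minutes_until_limit(samples, limit):
    """samples: list of (minute, value) pairs.

    Returns the minutes from the last sample until the fitted trend
    reaches `limit`, or None when the trend is flat or falling.
    """
    xs = [t for t, _ in samples]
    ys = [v for _, v in samples]
    slope, intercept = fit_line(xs, ys)
    if slope <= 0:
        return None
    crossing = (limit - intercept) / slope
    return max(0.0, crossing - xs[-1])


# Hypothetical memory usage in MB, sampled every 10 minutes.
memory_mb = [(0, 1000), (10, 1050), (20, 1100), (30, 1150)]
eta = minutes_until_limit(memory_mb, limit=2000)
```

With memory growing by 5 MB per minute, the estimate gives a team roughly 170 minutes of warning before the assumed limit, instead of an alert at the moment of failure.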

| Predictive error prevention is much more than a better set of tools. It is a more intelligent and proactive approach to safeguarding uptime, performance, and customer trust. |

AI in Log Analysis, Root Cause Identification, and Automated Remediation

AI-driven log analysis makes website troubleshooting faster and far less painful. Enterprise systems produce large volumes of logs, metrics and traces every day, and manually sifting through them to find the root cause of a sporadic 502 error is slow, tiresome work that is easy to botch. In contrast, AI analyzes telemetry in real time and clusters similar log patterns, which lets it flag anomalous behavior early, including silent failures that traditional monitoring might miss.
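Clustering similar log patterns can be approximated by masking the variable parts of each line and counting the resulting templates; templates that appear rarely are worth a closer look. This is a much-simplified sketch of the idea, and the log lines, the `<*>` placeholder and the function names are hypothetical.

```python
import re
from collections import Counter

# Numbers and dotted numbers (IDs, durations, IP addresses) are treated
# as variable parts of a log line.
_VARIABLE = re.compile(r"\b\d+(?:\.\d+)*\b")


def to_template(line):
    """Replace variable tokens so similar lines share one template."""
    return _VARIABLE.sub("<*>", line)


def rare_templates(lines, max_count=1):
    """Return templates seen at most `max_count` times."""
    counts = Counter(to_template(line) for line in lines)
    return [template for template, n in counts.items() if n <= max_count]


# Hypothetical application log lines.
logs = [
    "request 1001 served in 35 ms",
    "request 1002 served in 41 ms",
    "request 1003 served in 38 ms",
    "upstream 10.0.0.5 reset connection for request 1004",
]
unusual = rare_templates(logs)
```

The three "served" lines collapse into one common template, which leaves the single connection reset standing out even though no threshold was ever configured for it.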

AI-powered root cause analysis also helps engineers move from guesswork to clarity. Instead of beginning every incident from square one, teams get a structured view of what happened, where it started and which services are impacted. Key capabilities include:

- Metric correlation that links symptoms to probable underlying events

- Topology-aware analysis that maps problems across live service dependencies

- Natural language summaries that convert raw telemetry into an explanation of the incident

- Predictive connections that identify risky upstream behavior before it cascades
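Topology-aware analysis can be illustrated with a small dependency map: among the unhealthy services, the likeliest origins are those whose own dependencies are all healthy, and the impact is everything that depends on them. The service names and helper functions below are hypothetical, and the heuristic is a simplification of what causal analysis tools do.

```python
def root_cause_candidates(dependencies, unhealthy):
    """Unhealthy services with no unhealthy dependency of their own."""
    return sorted(
        service
        for service in unhealthy
        if not any(dep in unhealthy for dep in dependencies.get(service, ()))
    )


def impacted_by(dependencies, service):
    """All services that depend on `service`, directly or transitively."""
    impacted = set()
    frontier = [service]
    while frontier:
        current = frontier.pop()
        for candidate, deps in dependencies.items():
            if current in deps and candidate not in impacted:
                impacted.add(candidate)
                frontier.append(candidate)
    return impacted


# Hypothetical topology: each service maps to the services it calls.
dependencies = {
    "frontend": ["api"],
    "api": ["database", "cache"],
    "database": [],
    "cache": [],
}
unhealthy = {"frontend", "api", "database"}

candidates = root_cause_candidates(dependencies, unhealthy)
blast_radius = impacted_by(dependencies, "database")
```

Here three services look unhealthy, but only the database has no unhealthy dependency, so it becomes the starting point for investigation.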

More importantly still, AI is now moving into automated remediation. For instance, it can restart failed services, rebalance resources when memory begins to run low or roll back updates that appear to be malfunctioning before they cause broader disruption.
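A remediation loop of this kind is often described as a playbook with guardrails: known conditions map to known actions, and anything unknown or repeatedly failing is handed to a person. The sketch below only records decisions rather than performing them; the condition names, action names and the limit of two attempts are assumptions for illustration.

```python
from collections import Counter

# Hypothetical mapping from detected condition to remediation action.
PLAYBOOK = {
    "service_down": "restart_service",
    "memory_low": "rebalance_resources",
    "bad_deploy": "rollback_release",
}


class Remediator:
    """Chooses an action per condition, with a cap on automatic retries."""

    def __init__(self, max_attempts=2):
        self.max_attempts = max_attempts
        self.attempts = Counter()
        self.log = []

    def handle(self, service, condition):
        action = PLAYBOOK.get(condition)
        key = (service, condition)
        # Guardrail: unknown conditions and repeated failures go to a human.
        if action is None or self.attempts[key] >= self.max_attempts:
            decision = "escalate_to_human"
        else:
            self.attempts[key] += 1
            decision = action
        self.log.append((service, condition, decision))
        return decision


remediator = Remediator()
decisions = [remediator.handle("api", "service_down") for _ in range(3)]
unknown = remediator.handle("api", "disk_corruption")
```

The first two failures trigger a restart, the third is escalated, and so is the condition the playbook does not know, which keeps automation from looping on a problem it cannot fix.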

| This is about more than speeding up troubleshooting. It is a more intelligent approach that reduces alert fatigue and operational pressure while building a hosting environment that hardens over time. |

AI-Powered Security: Detecting and Preventing Website Threats in Real Time

Website error prevention and website security have become closely related, because many of the most damaging “errors” a site suffers are really attacks. As threats become more sophisticated, traditional rules-based tools alone are no longer enough. AI-powered systems, on the other hand, focus on behavior to help teams identify abnormal activity, including credential stuffing, SQL injection attempts, malware and suspicious traffic patterns, before it evolves into a bigger incident.

Key capabilities include:

- Real-time traffic analysis to differentiate legitimate traffic spikes from DDoS attack behavior

- User and entity behavior analytics that identify suspicious sessions, account takeovers and insider threats

- Malware recognition based on actual behavior, not just signatures

- Early detection of malicious third-party scripts injected via JavaScript

- Regular validation of access controls, plugin configurations and SSL/TLS status to identify misconfigurations before they can be exploited
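As one behavioral example from the list above, credential stuffing tends to look different from a forgetful user: many failed logins from one source spread across many different usernames. The sketch below applies that heuristic to login events; the thresholds, the event shape and the function name are assumptions, and the IP addresses come from ranges reserved for documentation.

```python
from collections import defaultdict


def suspected_credential_stuffing(events, min_failures=20, min_distinct_users=10):
    """events: iterable of (source_ip, username, success) tuples.

    Flags sources with many failures spread over many usernames.
    """
    failures = defaultdict(int)
    usernames = defaultdict(set)
    for ip, username, success in events:
        if not success:
            failures[ip] += 1
            usernames[ip].add(username)
    return sorted(
        ip
        for ip in failures
        if failures[ip] >= min_failures
        and len(usernames[ip]) >= min_distinct_users
    )


# Hypothetical events: one source tries 25 different accounts,
# another repeatedly mistypes the password for a single account.
events = [("203.0.113.7", f"user{i}", False) for i in range(25)]
events += [("198.51.100.4", "alice", False) for _ in range(25)]
events += [("198.51.100.4", "alice", True)]

suspects = suspected_credential_stuffing(events)
```

Both sources produce the same number of failures, so a plain failure-count threshold would flag both; looking at the spread of usernames singles out only the first.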

Just as importantly, security monitoring and performance monitoring have now converged. The same artificial intelligence systems that identify memory leaks can also monitor login failures or signs of data exfiltration. Unified observability platforms allow teams to see website health more clearly and holistically in one place.

| This change enables teams to break out of silos and react sooner. It makes for a more intelligent and robust way of defending website performance, stability, and security. |

How UltaHost Leverages AI to Deliver Reliable, Error-Free Hosting

With AI, UltaHost is able to provide hosting that is more dependable, secure and proactive, instead of relying solely on the strength of its hardware and network performance. Its infrastructure employs intelligent monitoring, predictive error detection and automated remediation to identify issues before they impact websites. In practical terms, hosted sites get real-time monitoring of CPU, RAM, bandwidth and disk I/O, predictive traffic scaling ahead of demand, and behavioral intrusion detection that blocks DDoS activity and brute-force attacks at the earliest possible stage.

Key benefits include:

- Greater uptime through fast detection and remediation of issues before they turn into outages

- Lower MTTR, because AI-assisted root cause analysis gives support teams a better starting point

- Aggressive security via behavioral detection that catches threats static tools miss

- Reduced operational overhead, with automation taking care of common problems like plugin conflicts, certificate renewals and configuration drift

A key element underpinning this approach is the continuous learning pipeline behind it. Instead of relying on outdated techniques or stale snapshots, the AI models used by UltaHost keep learning from new traffic patterns, attack vectors and changing website behavior. That is ultimately what makes AI-native hosting more resilient: it doesn’t just monitor for problems, it learns how to avoid them.

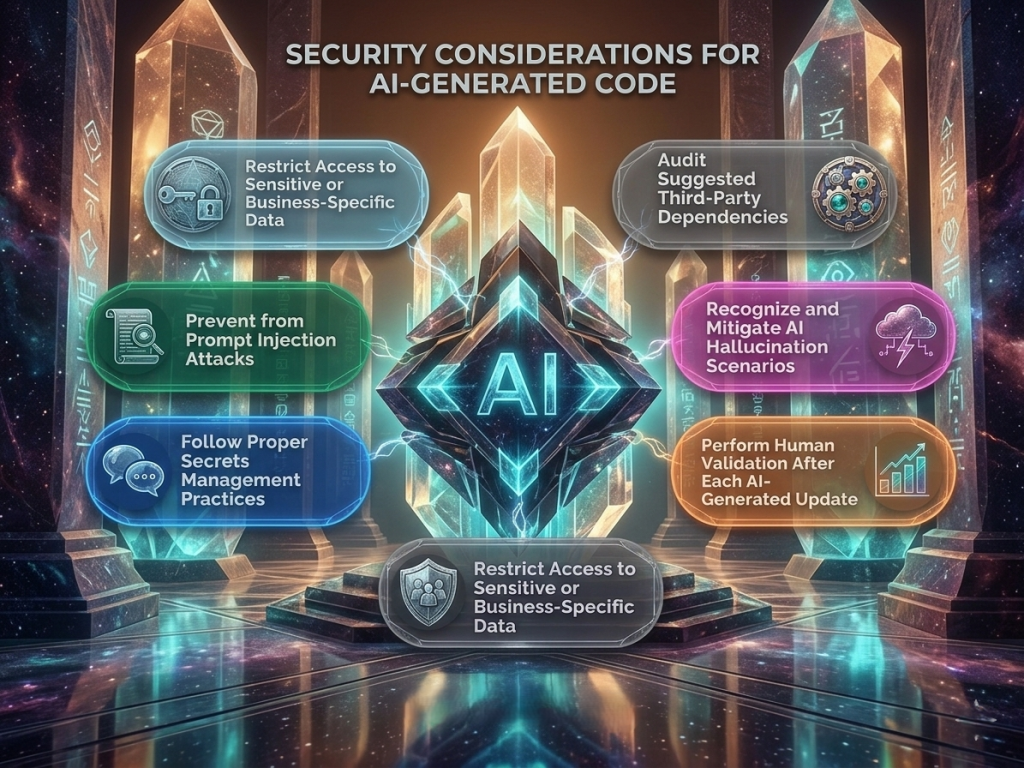

Implementing AI-Based Error Detection: Technical Considerations and Best Practices

Implementing AI error detection starts with observability. Anomaly detection can only be as reliable as the telemetry it sees, so you need wide coverage across logs, metrics and distributed traces in your stack. Without that foundation, even sophisticated AI monitoring will leave gaps where failures can fester in darkness. Equally important, teams must select the detection model that best matches their environment: statistical baselines, machine learning models and topology-aware causal AI solve different types of reliability problems with varying degrees of accuracy, training requirements and deployment complexity.

Practical implementation best practices include:

- Instrument applications using OpenTelemetry to standardize vendor-agnostic telemetry across services

- Define clear SLOs (Service Level Objectives) so that anomaly detection can be measured against a baseline of acceptable performance

- Improve signal quality for on-call teams with multilayered alerting and AI noise reduction

- Use AI-generated insights as an investigative point of departure rather than a conclusion, because human validation is still key

- Create feedback loops wherein engineers can tag false positives and confirmed detections, tuning model precision over time

- Run chaos engineering exercises to find out whether the monitoring system can really detect the fault scenarios that matter most
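Two of the practices above lend themselves to simple arithmetic. An SLO implies an error budget, and the burn rate says how fast that budget is being consumed; engineer feedback on detections gives a precision figure that shows whether tuning is working. The sketch below assumes a 99.9% availability SLO and hypothetical request and review counts.

```python
def burn_rate(failed, total, slo_target):
    """Observed error rate divided by the error budget (1 - SLO target).

    A value of 1.0 spends the budget exactly on schedule; higher values
    spend it faster.
    """
    if total == 0:
        return 0.0
    error_budget = 1.0 - slo_target
    return (failed / total) / error_budget


def detection_precision(confirmed, false_positives):
    """Share of reviewed detections that engineers confirmed as real."""
    reviewed = confirmed + false_positives
    return confirmed / reviewed if reviewed else None


# Hypothetical figures: 50 failed requests out of 10,000 against a 99.9% SLO.
rate = burn_rate(failed=50, total=10_000, slo_target=0.999)

# Hypothetical review outcome: 8 confirmed detections, 2 false positives.
precision = detection_precision(confirmed=8, false_positives=2)
```

A burn rate of about 5 means the budget is being spent five times faster than the SLO allows, and a precision of 0.8 gives a baseline to compare against after the next round of model tuning.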

From an operational resilience point of view:

Do not consider AI monitoring to be an add-on; treat it as part of your fundamental reliability architecture, because predictive visibility is only as valuable as the telemetry pipeline that enables it.

Don’t let slow hosting hold your website back

Stay ahead of downtime and threats with UltaHost’s intelligent hosting infrastructure.

Final Thoughts

Gone are the days when AI-driven website error detection and prevention was a niche experiment. It has become something that modern web operations cannot afford to be without. As websites grow in sophistication, attack surfaces widen and uptime expectations increase, organizations that adopt intelligent, continuously learning monitoring will be best prepared to prevent failures of all types while reducing MTTR, increasing security and enhancing user experience.

Ultimately, the true winners will be those that adopt AI-driven observability early. Within the next few years, resilience won’t hinge solely on infrastructure performance but on how intelligently you can detect and contextualize problems before they morph into major incidents.

FAQs

What is AI-based website error detection and how does it work?

AI-based website error detection uses machine learning to learn typical website behavior from logs, metrics and traces, including CPU usage, memory load, response time and database latency. That means it can spot unusual patterns earlier than regular monitoring and potentially prevent outages before users are aware of them.

Why is AI anomaly detection better than static threshold monitoring?

Static threshold monitoring can overlook context-specific problems and raise false positives. AI anomaly detection, on the other hand, creates dynamic baselines around actual traffic patterns, seasonality and workload behavior, allowing teams to catch real incidents more quickly with less alert noise.

How does AI improve root cause analysis for website performance issues?

By correlating all telemetry signals, tracing service dependencies and grouping similar symptoms into a single incident view, AI enhances root cause analysis. As a result, engineers can quickly pinpoint the probable source of 502 errors, slow database queries or memory leaks.

Can AI help prevent website security threats as well as technical errors?

Yes. AI-enabled monitoring can identify suspicious behaviors like credential stuffing, DDoS activity, malware behavior, SQL injection attempts and JavaScript injection. It therefore strengthens both the reliability of websites and their real-time security protection.

What should businesses look for in an AI-powered hosting provider?

Businesses should look for robust observability coverage, predictive monitoring, behavioral threat detection, automated remediation, continuous model learning on newly arriving data, and improving MTTR over time. In that sense, an AI-integrated hosting provider like UltaHost provides more than just hosting performance: it brings resilience, security and operational efficiency.